Why it matters:

AI-RAN has often been framed as a forward-looking convergence story across radio, compute and orchestration. SoftBank is now tying it to something more tangible: Physical AI, where vision-language models interpret the environment and vision-language-action systems turn those interpretations into motion. In SoftBank’s framing, the network edge is not just a better place to host inference. It becomes part of the control loop.

The big picture:

This is part of a broader repositioning. At MWC26, SoftBank said it is shifting from a traditional carrier toward an “AI-native infrastructure provider,” with a Telco AI Cloud vision that turns communications infrastructure into an active compute platform across edge and cloud. Physical AI sits near the center of that story.

What SoftBank is actually saying:

Its recent research posts argue that increasingly capable robots will not be able to rely only on onboard compute, while cloud-only processing introduces too much latency and variability. SoftBank’s answer is AI-RAN-based MEC: offload higher-level perception and task decomposition to infrastructure near the base station, while leaving motion-critical execution on the robot itself.

How the architecture works:

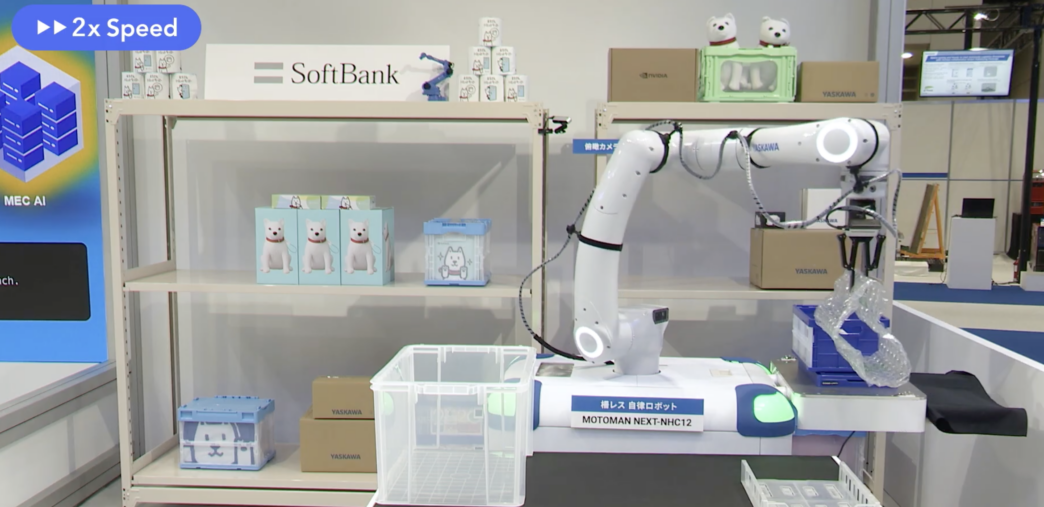

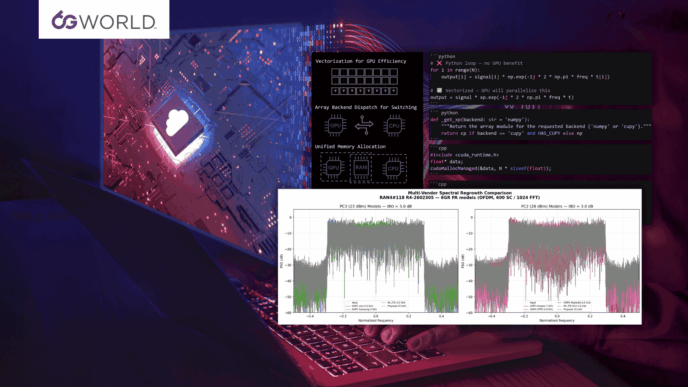

SoftBank describes a split model. A VLM running on MEC takes in camera feeds and task information, then identifies what should be picked and where it should be placed. A VLA running on the robot handles the action layer, including grasping and motion generation, where responsiveness and safety matter most. That is a more interesting AI-RAN proposition than generic “distributed AI,” because it maps compute placement directly to operational constraints.
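To make the split concrete, here is a minimal sketch of how the two layers might hand off work. It is illustrative only, not SoftBank's implementation: the names `PickPlacePlan`, `mec_vlm_plan`, `robot_vla_execute` and `control_step` are hypothetical, and the "models" are simple stand-in functions. The point is the boundary: the edge returns a small high-level plan, and everything motion-related stays on the robot.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PickPlacePlan:
    """High-level decision made at the edge: what to pick and where to place it."""
    target_object: str
    place_location: str


def mec_vlm_plan(detected_objects: list[str], task: dict[str, str]) -> Optional[PickPlacePlan]:
    """Stand-in for the VLM on MEC (hypothetical).

    A real system would run a vision-language model on camera frames. Here the
    "perception" result is passed in as a list of object names, and the task
    maps object names to destinations. The first detected object that the task
    has a destination for is selected.
    """
    for obj in detected_objects:
        if obj in task:
            return PickPlacePlan(target_object=obj, place_location=task[obj])
    return None


def robot_vla_execute(plan: PickPlacePlan) -> list[str]:
    """Stand-in for the on-robot VLA (hypothetical).

    Turns the plan into motion steps locally, so grasping and motion generation
    do not depend on a network round trip.
    """
    return [
        f"grasp {plan.target_object}",
        f"move to {plan.place_location}",
        f"release {plan.target_object}",
    ]


def control_step(detected_objects: list[str], task: dict[str, str]) -> list[str]:
    """One pass of the loop: plan at the edge, execute on the robot."""
    plan = mec_vlm_plan(detected_objects, task)
    if plan is None:
        return []  # nothing in view matches the task; the robot stays idle
    return robot_vla_execute(plan)


if __name__ == "__main__":
    for step in control_step(["cable", "smartphone"], {"smartphone": "tray_A"}):
        print(step)
```

In this sketch, upgrading the planner means changing only `mec_vlm_plan`, which mirrors the argument that edge-hosted models can improve without touching the robot-side action layer.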

Where it gets real:

The strongest part of the story is that SoftBank is not keeping this at the concept-video level. In a logistics warehouse validation with Yaskawa Electric, it tested Physical AI on real picking and placement tasks involving smartphones and accessories in unstructured boxes, where the robot had to decide what to pick first, how to grasp it, and how to place it. That is a more credible proving ground than a clean demo environment because the task conditions change with product mix and cannot be fully captured by fixed rules.

Between the lines:

SoftBank is effectively arguing that future telecom infrastructure will not just connect robots. It will help think for them. More specifically, it is trying to make AI-RAN relevant to industrial automation, warehouse operations, and collaborative robotics by showing that networked edge compute can upgrade robot capability without replacing robot hardware every time the AI model improves.

That matters for telecom because:

If this model scales, operators and infrastructure providers could have a stronger claim in the emerging Physical AI stack, not as passive connectivity suppliers but as providers of low-latency inference environments, training feedback loops, and orchestration fabrics that link communications with compute. SoftBank explicitly says its infrastructure combines MEC inference with GPU-based training so field data can be fed back into model improvement.

What to watch:

The open question is whether this remains a SoftBank-specific integration story around AITRAS, AI-RAN and selected partners, or whether it becomes a broader industry pattern. Either way, SoftBank is pushing an important idea: AI-RAN will have a better chance of mattering if it is attached to a hard operational problem. Physical AI may be one of the clearest candidates yet.