Produced in partnership with Keysight Technologies

The public conversation around 6G still swings too easily between hype and dismissal. Neither helps much. A more grounded reading is that the agenda is becoming clearer just as the work becomes harder. ITU’s IMT-2030 framework already makes that visible: six usage scenarios, 20 minimum technical performance requirements, and a much broader target than simply faster wireless. The implication is straightforward. The burden of proof is rising.

That is why 6G should not be read as a single air-interface invention waiting to be unveiled. In 3GPP, the process is unfolding in stages. Release 20 is the study phase for 6G, while Release 21 is expected to carry the first normative 6G work. The formal studies underway are therefore not about refining a finished blueprint. They are about narrowing a candidate set of technical directions and determining which ones deserve to become part of the standardization path.

This is also why the real story is not “6G versus 5G.” The real story is that early 6G is forcing a more explicit co-design of the PHY and the wider system. Public positions from the standards community and major vendors increasingly converge around the same themes: stronger coverage, lower complexity, simpler migration, tighter terrestrial and non-terrestrial integration, broader but more disciplined AI support, and better energy and spectrum efficiency. That is less a clean-slate narrative than a systems-level correction to what 5G made possible but also made more complicated.

6G PHY is becoming a co-design challenge

That shift matters most at the PHY layer. In earlier cycles, it was easier to treat waveform design, coding, beamforming, mobility, and network architecture as partially separate conversations. That separation is becoming much less defensible. The emerging 6G problem is not just to improve one block inside the radio chain. It is to make interacting design choices work together under realistic constraints on energy, coverage, complexity, spectrum, implementation, and deployment.

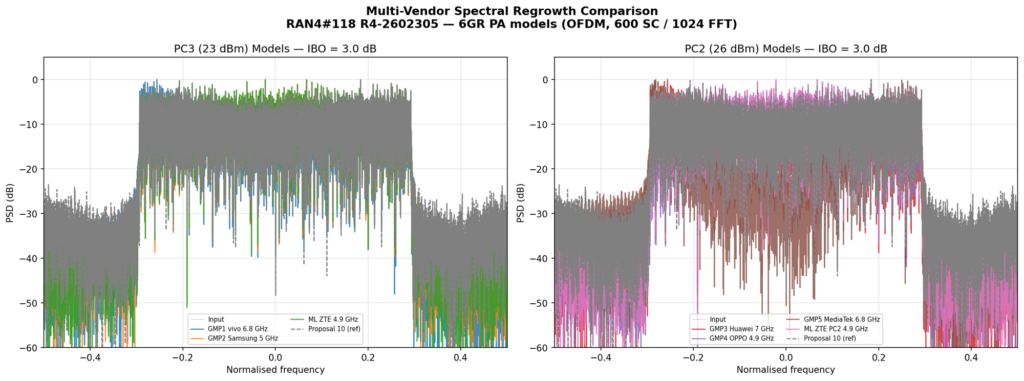

That is what makes the current moment different. The industry is no longer asking only whether an idea is novel. It is asking whether an idea still holds once the full engineering context is applied. This matters especially at PHY, because many of the hardest trade-offs appear there first: power amplifier behavior, uplink coverage, decoder complexity, duplexing feasibility, antenna scaling, channel realism, and the question of whether AI belongs inside a procedure or merely next to it.

Table 1. Reframing 6G PHY

| Question | Weak framing | Serious framing |
| --- | --- | --- |
| What is 6G? | A faster or more AI-heavy successor to 5G | A broader redesign point where radio, architecture, AI, sensing, spectrum, and migration are being aligned |
| What matters at PHY? | A single breakthrough mechanism | A set of interacting trade-offs across waveform, coding, duplexing, beamforming, coverage, and complexity |
| How should AI be used? | As a vague promise of intelligence | In bounded procedures where overhead, robustness, or prediction can be improved under explicit metrics |
| What makes a proposal credible? | A clean gain on one chart | A result that still holds when coverage, complexity, migration, and standards context are considered together |
| What is the migration path? | Replace 5G with 6G | Evolve from 5G SA and 5G-Advanced toward a simpler, more unified 6G architecture |
| What should industry buyers look for? | Peak technical ambition | Day-one operational value, manageable complexity, and a believable standardization and deployment path |

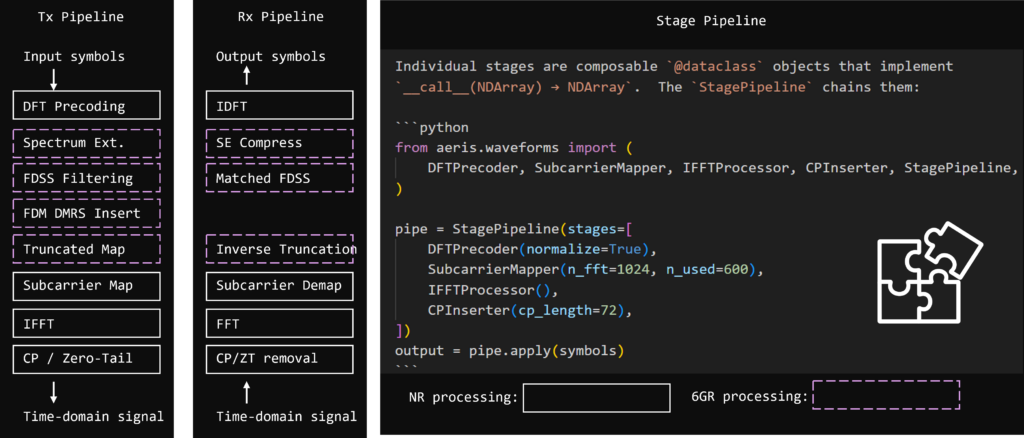

Waveforms are still central, but 6G is rewarding discipline over disruption

One of the clearest signs of maturity in early 6G work is that waveform discussions are no longer driven by novelty alone. The more relevant question is whether a candidate approach improves uplink behavior, coverage, spectral efficiency, and implementation realism without creating unnecessary disruption in the wider radio architecture.

That is why the most credible direction today looks more evolutionary than revolutionary. Public industry framing increasingly points to continuity where continuity still makes engineering sense, especially when the goal is to improve usable performance across existing and emerging spectrum rather than pursue a clean break for its own sake. In practice, that puts more emphasis on power-limited operation, uplink behavior, hardware realism, and deployable gains than on elegant but isolated waveform concepts.

Read through that lens, waveform evolution becomes a test of engineering discipline. The strongest candidates are unlikely to be the most radical ones. They are more likely to be the ones that preserve enough continuity with NR while delivering measurable gains in the conditions that now matter most: broader coverage, stronger uplink economics, improved efficiency, and a more credible path through standardization and deployment.
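To make the power-amplifier point concrete, here is a minimal sketch, not drawn from the article's sources, that compares the peak-to-average power ratio (PAPR) of a plain OFDM symbol with a DFT-spread OFDM symbol, the two uplink waveform options NR already supports. Lower PAPR generally lets a power amplifier run closer to saturation, which is one reason waveform choice shows up in uplink coverage. The QPSK payload, the 24 occupied subcarriers, the 96-point transform, the omission of a cyclic prefix, and all function names are illustrative assumptions. The naive DFT is chosen for readability, not speed.

```python
import cmath
import math
import random


def dft(samples, inverse=False):
    """Naive O(N^2) DFT; slow but dependency-free and fine for small N."""
    n = len(samples)
    sign = 1 if inverse else -1
    scale = 1.0 / n if inverse else 1.0
    return [
        scale * sum(
            s * cmath.exp(sign * 2j * math.pi * k * t / n)
            for t, s in enumerate(samples)
        )
        for k in range(n)
    ]


def papr_db(samples):
    """Peak-to-average power ratio of one time-domain symbol, in dB."""
    powers = [abs(s) ** 2 for s in samples]
    return 10 * math.log10(max(powers) / (sum(powers) / len(powers)))


def qpsk_symbols(rng, count):
    """Random unit-power QPSK symbols."""
    return [
        complex(rng.choice((-1, 1)), rng.choice((-1, 1))) / math.sqrt(2)
        for _ in range(count)
    ]


def mean_papr_db(dft_spread, used=24, fft_size=96, trials=30, seed=1):
    """Average PAPR over random symbols (assumed, illustrative parameters)."""
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        data = qpsk_symbols(rng, used)
        if dft_spread:
            data = dft(data)  # DFT precoding before subcarrier mapping
        # Localized mapping onto the first `used` bins; the unused bins are
        # zero, so the inverse transform yields a 4x oversampled waveform.
        grid = data + [0j] * (fft_size - used)
        total += papr_db(dft(grid, inverse=True))
    return total / trials


if __name__ == "__main__":
    print(f"OFDM mean PAPR:       {mean_papr_db(False):.1f} dB")
    print(f"DFT-s-OFDM mean PAPR: {mean_papr_db(True):.1f} dB")
```

In a toy setup like this the DFT-spread variant should show the lower average PAPR. The exact gap depends on modulation order, allocation size, and pulse shaping, so a number from a sketch of this kind is a starting point for comparison rather than a coverage claim.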

Coding is no longer a narrow performance story

The same pattern appears in coding. In 6G, coding is no longer a narrow performance conversation. It is increasingly a scaling conversation, where gain has to be judged together with latency, decoder burden, payload diversity, power consumption, and silicon cost. That makes simplistic “better code, better network” logic much less useful than it once was.

That matters because coding decisions sit at the intersection of theoretical efficiency and implementation reality. In a world of more diverse devices, broader service profiles, and tighter energy targets, the right coding path is not simply the one with the cleanest gain curve. It is the one that scales across payload sizes and latency requirements without dragging modem complexity, power draw, or architectural overhead in the wrong direction.

This is one reason the PHY conversation is becoming harder to simplify for a public audience. The most relevant design choices are increasingly multi-dimensional. A coding gain without a complexity story is incomplete. A waveform gain without a coverage story is incomplete. And a new air-interface mechanism that improves one layer while burdening the broader system may still lose once real engineering trade-offs are applied.

Duplexing is turning into a strategic 6G lever

Duplexing is another example of how quickly lower-layer ideas become system questions. 3GPP has already treated sub-band full duplex (SBFD) seriously enough to study it in Release 18 and then carry it further into Release 19 work. What makes that important is not just the concept itself but the problem it targets: the uplink, one of the most persistent weak points in mobile systems. SBFD attempts to improve it without assuming an unrealistic full-duplex baseline.

That is a very 6G-type problem. The pressure is not just to make duplexing more elegant. It is to make the uplink more useful while still managing coexistence, interference, filtering, coordination, and deployment realism. That is why duplexing is becoming more strategic. It sits at the boundary between PHY evolution and practical network value.

Array scale is changing the geometry of the radio problem

One of the less publicized but increasingly important shifts in 6G is that antenna evolution is pushing parts of the radio problem beyond comfortable far-field assumptions. As array scale grows, beamforming can no longer be treated only as a matter of more elements and more precision. It becomes more geometric, more computational, and more sensitive to how deployment conditions are actually modeled.

That matters because near-field behavior changes more than beam control. It affects how coverage claims are interpreted, how system gains are compared, and how credible certain architectural assumptions remain as 6G moves into higher frequencies, denser topologies, and more ambitious array configurations. In that sense, array scale is not just another performance lever. It is becoming part of the methodological burden of serious 6G PHY work.
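One common rule of thumb for where far-field assumptions begin is the Fraunhofer distance, 2D²/λ, for an array of aperture D at wavelength λ. The article does not name a specific criterion, so treat the following as an illustrative sketch: the aperture sizes and carrier frequencies are assumptions chosen only to show how the boundary scales, and the function name is hypothetical.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0


def fraunhofer_distance_m(aperture_m, carrier_hz):
    """Conventional far-field boundary 2*D^2/lambda, in metres."""
    wavelength_m = SPEED_OF_LIGHT_M_S / carrier_hz
    return 2.0 * aperture_m ** 2 / wavelength_m


if __name__ == "__main__":
    # Hypothetical (aperture in m, carrier in GHz) pairs, not taken from
    # any real deployment.
    for aperture_m, carrier_ghz in [(0.5, 3.5), (1.0, 7.0), (1.0, 15.0)]:
        d = fraunhofer_distance_m(aperture_m, carrier_ghz * 1e9)
        print(f"D = {aperture_m} m at {carrier_ghz} GHz -> about {d:.0f} m")
```

Because the boundary grows with the square of the aperture and linearly with carrier frequency, larger arrays at higher frequencies can place a meaningful share of users inside the near-field region, which is where plane-wave beamforming models begin to strain.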

NTN is no longer an edge case in radio design

The same broadening is visible in the relationship between terrestrial and non-terrestrial design. For a long time, NTN could be treated as a specialized domain with its own assumptions and ecosystem logic. That separation is becoming harder to maintain. In early 6G thinking, terrestrial and non-terrestrial continuity is increasingly part of the mainstream design agenda rather than a peripheral extension.

This has direct PHY implications. Coverage behavior, mobility robustness, service continuity, timing assumptions, and usable uplink performance all look different once NTN is treated as part of the wider radio problem. The practical consequence is that 6G claims will increasingly be judged not only by how they perform in ideal terrestrial settings, but by whether they remain relevant across a broader and less forgiving connectivity landscape.
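The timing point can be illustrated with simple propagation arithmetic. The sketch below estimates the round-trip delay on the link between a device and a satellite, using the standard geometric slant-range expression for a spherical Earth. The 600 km and 35,786 km altitudes, the 10-degree elevation, the 5 km terrestrial cell, and the function names are illustrative assumptions; feeder links and processing delay are ignored.

```python
import math

SPEED_OF_LIGHT_KM_S = 299_792.458
EARTH_RADIUS_KM = 6371.0


def slant_range_km(altitude_km, elevation_deg):
    """Distance from a ground device to a satellite seen at a given elevation."""
    r_sin = EARTH_RADIUS_KM * math.sin(math.radians(elevation_deg))
    return math.sqrt(
        r_sin ** 2 + altitude_km ** 2 + 2 * EARTH_RADIUS_KM * altitude_km
    ) - r_sin


def round_trip_ms(distance_km):
    """Two-way free-space propagation delay in milliseconds."""
    return 2.0 * distance_km / SPEED_OF_LIGHT_KM_S * 1e3


if __name__ == "__main__":
    # Assumed example links: (label, one-way distance in km).
    links = [
        ("terrestrial cell, 5 km", 5.0),
        ("LEO 600 km, overhead", slant_range_km(600.0, 90.0)),
        ("LEO 600 km, 10 deg elevation", slant_range_km(600.0, 10.0)),
        ("GEO 35,786 km, overhead", slant_range_km(35_786.0, 90.0)),
    ]
    for label, distance_km in links:
        print(f"{label}: {round_trip_ms(distance_km):.3f} ms round trip")
```

Even the shortest satellite case sits orders of magnitude above a terrestrial cell, and the delay changes as the satellite moves through the sky, which is why timing and mobility procedures cannot simply be inherited from terrestrial assumptions.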

AI in the air interface is moving from concept to discipline

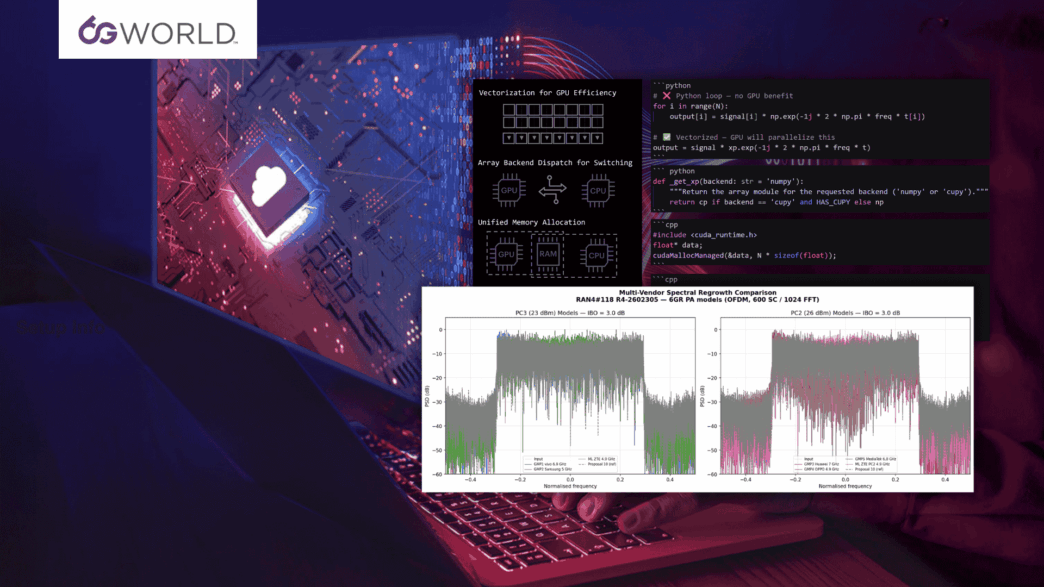

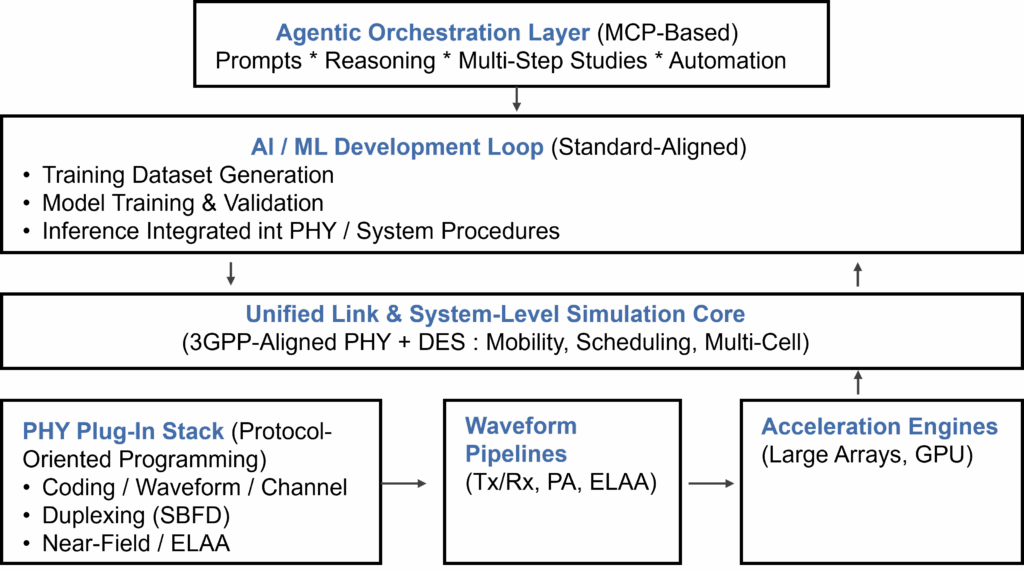

AI is where the 6G conversation can drift fastest into empty language, so it is worth being precise. The serious direction so far has been notably disciplined. AI is least useful when it is described in sweeping terms. It becomes useful when it is applied to bounded procedures where prediction, overhead reduction, robustness, or adaptation can be improved under explicit assumptions.

That is the key distinction. The practical question is not whether AI sounds central to 6G. It is whether AI improves the radio enough to justify added complexity, data requirements, and systems overhead. That is why the stronger framing is not “AI everywhere,” but AI where it can be bounded, compared, and managed.

That cautious direction aligns with broader industry thinking. AI may well become central to 6G over time, but near-term credibility will depend less on how expansively it is described and more on where it delivers bounded value under explicit metrics, realistic channel assumptions, and system-level constraints.

Table 2. Where 6G PHY pressure is building

| Domain | What is changing | Why it matters |
| --- | --- | --- |
| Waveforms | The direction is toward improvement with continuity, not novelty for its own sake | Coverage, uplink efficiency, PA behavior, and usable performance matter more than elegant but disruptive waveform change |
| Coding | Coding is increasingly judged as a performance-complexity problem, not just a gain problem | 6G must scale across very different devices, payload sizes, and energy constraints |
| Duplexing | SBFD has moved from concept toward serious standards work | Uplink bottlenecks, coexistence, interference handling, and deployment practicality are becoming strategic design questions |
| Massive MIMO / near-field | Array scale is pushing parts of 6G beyond comfortable far-field simplifications | The radio problem becomes more geometric, more computational, and more deployment-sensitive |
| AI-assisted procedures | AI is entering the air interface in tightly scoped functions such as CSI, beam management, positioning, and mobility | The bar is shifting from “AI sounds central” to “AI must deliver bounded value under explicit assumptions” |
| NTN-aware radio design | 6G is being shaped with stronger terrestrial and non-terrestrial continuity in mind | Coverage, resilience, and service continuity are becoming part of mainstream radio design rather than edge cases |

The 6G PHY debate is really about credibility under constraints

This is where the conversation comes back to engineering discipline. The important question for 6G PHY is no longer “what is possible in principle?” It is “what remains credible once performance, complexity, coverage, migration, NTN integration, and AI support are all considered together?”

Seen that way, 6G PHY is not a narrow technical layer waiting to be optimized. It is the place where many of the industry’s biggest choices are being forced into the open. Which mechanisms deliver enough gain to matter? Which ones scale? Which ones simplify the system instead of burdening it? Which ones fit a real migration path? And which ones can survive standardization, not just research enthusiasm?

That is why this layer matters so much. It is where ambitious ideas first run into engineering reality. And increasingly, it is where the market will begin to distinguish between concepts that are merely impressive and concepts that are robust enough to shape the actual 6G path.

Why this matters now

What the market needs now is not another generic “what to expect from 6G” explainer. It needs a clearer view of what the industry is actually trying to solve at the radio layer, and which kinds of claims are likely to survive the next phase of standardization and deployment reality.

That is what makes 6G PHY worth watching so closely. This is where some of the most consequential choices are now being forced into the open: which mechanisms deliver enough gain to matter, which ones scale without adding the wrong complexity, which ones support a credible migration path, and which ones are strong enough to survive not just research enthusiasm but engineering scrutiny.

The next phase of 6G will still depend on ambition. But it will increasingly reward teams that can connect ambition to evidence, and evidence to deployable system design. That is where the market will start separating ambitious narratives from engineering-grade progress.

About this article

This article was developed in collaboration with Keysight Technologies as part of a broader discussion on 6G PHY evolution, AI-assisted radio procedures, and the growing importance of engineering-grade evaluation.

Upcoming discussion

To go deeper into one important dimension of this topic, join 6GWorld and Keysight Technologies for the upcoming webinar: 3GPP AI Simulation for 5G-Advanced & 6G Research.
