Why it matters

NVIDIA is trying to shift telecom’s role in the AI era from connectivity provider to distributed AI infrastructure provider. Its AI Grid vision describes a distributed, interconnected and orchestrated platform that links AI factories, regional hubs and edge sites so workloads can run where latency, cost and service requirements make the most sense.

That matters because it reframes the industry’s opportunity. Instead of only carrying AI traffic, operators are being told they can host, route and monetize AI inference closer to users, devices and machines. NVIDIA’s own framing is that AI-native services should run on infrastructure closest to the endpoint when strict SLAs, token economics and concurrency requirements matter.

The big picture

The GTC 2026 telecom story was bigger than AI-RAN. NVIDIA’s broader claim is that telecom and distributed cloud providers already operate a vast installed footprint: around 100,000 distributed network data centers worldwide, spanning regional hubs, mobile switching offices and central offices. NVIDIA says that footprint has significant spare power that could be repurposed for AI capacity over time.

That is the strategic logic behind the AI Grid. If AI factories are the centralized engine of model training and large-scale inference, then the AI Grid is the distributed layer that puts intelligence closer to where it is used. NVIDIA’s AI Grid page explicitly frames this as a system that connects AI factories with regional hubs and edge sites into one unified platform.

What NVIDIA is actually saying

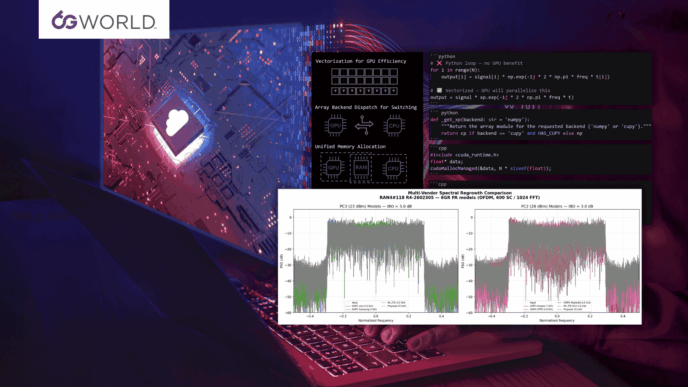

At the center of the pitch is a simple idea: not every AI workload belongs in the cloud. NVIDIA argues that real-time voice, vision, control and physical AI applications increasingly need predictable latency, lower network overhead, better cost per token and the ability to handle large numbers of concurrent sessions. Its AI Grid framework highlights four benefits in particular: predictable latency, better token economics, higher utilization and resilience, and concurrency at scale.

This is also why orchestration is so central to the story. Akamai, in launching what it calls the first global-scale implementation of NVIDIA AI Grid, said it is routing AI workloads across 4,400 edge locations and balancing them across edge, regional and core infrastructure based on latency, cost and performance. That is a good indication that AI Grid is not just a GPU placement story. It is a control plane story.
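To make the control plane point concrete, here is a minimal Python sketch of latency- and cost-aware placement. It is an illustration only, not Akamai's or NVIDIA's implementation: the `Site` fields, the `place` function, and every number are hypothetical, and the policy (cheapest site that meets the latency budget and has capacity) is just one simple assumption about how such a decision could be made.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Site:
    """A hypothetical inference site; all fields are illustrative."""
    name: str
    tier: str             # "edge", "regional" or "core"
    rtt_ms: float         # assumed network round trip to the user
    cost_per_mtok: float  # assumed cost per million tokens
    free_slots: int       # assumed concurrent sessions still available


def place(sites, max_rtt_ms, sessions=1):
    """Return the cheapest site that meets the latency budget and has
    enough free capacity, or None if no site qualifies."""
    eligible = [
        s for s in sites
        if s.rtt_ms <= max_rtt_ms and s.free_slots >= sessions
    ]
    if not eligible:
        return None
    # Cheapest first; break ties by lower round-trip time.
    return min(eligible, key=lambda s: (s.cost_per_mtok, s.rtt_ms))


# Made-up example footprint: one site per tier.
SITES = [
    Site("edge-a", "edge", rtt_ms=8.0, cost_per_mtok=0.90, free_slots=4),
    Site("regional-a", "regional", rtt_ms=25.0, cost_per_mtok=0.60, free_slots=40),
    Site("core-a", "core", rtt_ms=70.0, cost_per_mtok=0.40, free_slots=400),
]

if __name__ == "__main__":
    # A tight budget forces the edge; a loose one falls back to the cheapest tier.
    for budget in (20, 30, 100):
        choice = place(SITES, max_rtt_ms=budget)
        print(budget, choice.name if choice else None)
```

Even in this toy version, the decision depends on live capacity and per-request requirements, not only on where the GPUs sit. That is the sense in which orchestration, rather than placement, carries the argument.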

Between the lines

The real bet here is that telecom infrastructure becomes part of the AI stack. That is the deeper point Ronnie Vasishta has been making publicly: accelerated compute is allowing the telecom compute stack and the AI compute stack to converge in ways that were not practical in earlier virtualization waves.

NVIDIA’s official announcements support that direction. In its March 16 announcement with T-Mobile and Nokia, Jensen Huang said telecom networks are evolving into AI infrastructure and described the collaboration as a blueprint for turning the 5G network into a distributed AI computer.

That is not a small rhetorical change. It suggests the network is no longer being positioned only as transport for AI workloads generated elsewhere. It is being positioned as part of the place where AI runs.

Where AI-RAN fits

AI-RAN is not the whole AI Grid story, but it is one of its most important on-ramps. NVIDIA’s March 16 announcement made clear that T-Mobile is using AI-RAN-ready infrastructure with Nokia software to support physical AI applications while continuing to deliver advanced 5G services.

That matters because AI-RAN is being pulled out of a narrow “AI for network optimization” box and placed into a much broader infrastructure discussion. In this framing, AI-RAN is not just about radio efficiency. It is about making parts of the mobile network available for shared AI and connectivity workloads, especially where ultra-low latency and proximity matter.

In other words: AI-RAN is becoming part of the commercial logic for distributed inference, not just the engineering logic for better radio performance.

Why 6G enters the picture

This is where the AI Grid narrative becomes directly relevant to 6G. On February 28 at Mobile World Congress, NVIDIA and a coalition including BT, Cisco, Deutsche Telekom, Ericsson, MITRE, Nokia, SK Telecom, SoftBank and T-Mobile announced a commitment to build 6G on AI-native, open, secure and trustworthy platforms. NVIDIA said 6G must embed AI across the RAN, edge and core, support integrated sensing and communications, and evolve through software-defined platforms.

That gives the AI Grid a bigger role than just “distributed edge AI.” It starts to look like the operational environment NVIDIA believes future 6G systems will depend on: distributed compute, software-defined orchestration, AI-native control layers and closer coupling between sensing, inference and wireless infrastructure. That reading is an interpretation, but it aligns closely with NVIDIA’s published 6G and AI infrastructure messaging.

What makes this different from old edge computing talk

Edge computing has been discussed in telecom for years, often with disappointing commercial results. What makes the AI Grid pitch more serious is that it is tied to a new economic model.

NVIDIA is not just arguing for processing closer to the edge. It is arguing that the value of AI services increasingly depends on things like time to first token, tokens per second, quality of experience, and latency-aware routing across distributed infrastructure. Its public materials explicitly frame AI Grid benefits in terms of token economics and workload placement efficiency, not just generic “lower latency.”
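A rough way to see why these metrics pull in different directions is to model end-to-end response time as network round trip plus time to first token plus generation time. The Python sketch below does that under stated assumptions: the formula is a simplification, and the two site profiles and all of their numbers are invented for illustration, not taken from NVIDIA or any operator.

```python
def response_time_s(rtt_ms, ttft_ms, tokens_per_s, output_tokens):
    """Simplified end-to-end time: network round trip, plus time to first
    token, plus the time to stream the remaining output."""
    return (rtt_ms + ttft_ms) / 1000 + output_tokens / tokens_per_s


# Hypothetical profiles: the edge site is closer but slower per stream,
# the core site is farther away but generates tokens faster.
PROFILES = {
    "edge": {"rtt_ms": 10, "ttft_ms": 150, "tokens_per_s": 80},
    "core": {"rtt_ms": 80, "ttft_ms": 200, "tokens_per_s": 100},
}


def faster_site(profiles, output_tokens):
    """Return the name of the profile with the lowest modeled response time."""
    return min(
        profiles,
        key=lambda name: response_time_s(
            output_tokens=output_tokens, **profiles[name]
        ),
    )


if __name__ == "__main__":
    for tokens in (20, 2000):
        print(tokens, faster_site(PROFILES, tokens))
```

With these made-up numbers, a 20-token voice reply comes back faster from the edge, while a 2,000-token generation finishes sooner at the core. That is the kind of workload-by-workload trade-off that latency-aware routing is meant to resolve.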

That is a much more specific argument than the old MEC story. It gives operators, cable players and distributed cloud providers a more concrete way to think about monetization.

Early proof points

The partner examples NVIDIA highlighted around GTC help show the shape of the thesis, even if they do not yet prove the whole model at industry scale.

Akamai says it is implementing NVIDIA AI Grid orchestration across 4,400 edge locations to intelligently route AI inference workloads.

Comcast said it is working with NVIDIA to bring AI processing closer to customers at the network edge to support next-generation applications.

T-Mobile and Nokia are presenting AI-RAN-ready infrastructure as a platform for physical AI applications at the network edge.

Taken together, these moves suggest the market is starting to test distributed AI delivery through very different infrastructure footprints: telco mobile networks, cable edge networks and global distributed cloud platforms.

The skepticism is still valid

The AI Grid thesis is compelling, but it is still ahead of broad proof in several areas.

There is still limited public evidence on the hardest questions: real utilization levels, power and thermal constraints at distributed sites, cost of orchestration, enterprise demand consistency, and whether distributed inference economics beat centralized cloud economics outside selected use cases. NVIDIA and its partners have shown credible momentum, but the industry still lacks enough transparent deployment data to call this settled.

There is also a strategic tension for operators. AI Grid sounds like a telecom opportunity, but it may also shift more control toward whoever owns the accelerated compute stack, orchestration layer, software frameworks and developer ecosystem. That is not a contradiction. It is the core strategic question.

What to watch

The next thing to watch is not another slogan. It is evidence.

Watch for more operator disclosures on how AI workloads are actually being split across centralized AI factories, regional hubs and distributed sites. Watch for harder numbers on token economics, latency performance, concurrency and commercial utilization. And watch whether AI-RAN moves from a pilot narrative to a repeatable infrastructure model tied to real service revenue.

If that happens, GTC 2026 may be remembered as the moment NVIDIA stopped talking about telecom as a vertical and started talking about it as AI infrastructure everywhere. And if that framing holds, then AI Grid may turn out to be one of the more important conceptual bridges between today’s 5G networks and tomorrow’s AI-native 6G systems.